Prompt injection is an exploit type in which adversaries add extra text to an input to confuse an AI model into doing something unintended, usually to reveal information or perform actions outside the bounds of their guardrails. The most common prompt injection trope seen in popular media is “ignore previous instructions.”

Prompt injection is part of a larger family of injection attacks, including code injection, SQL injection, cross-site scripting (XSS), and more. Injection attacks are old but remain popular. In fact, since injection attacks are so common of an exploit, the security company Lakera even released a gamified version of prompt injection named Gandalf less than six months after ChatGPT’s launch, fully aware of what was coming.

Recently, prompt injection attacks have been a popular news and social media topic. Since click-based economies demand engagement, the headlines have been scary, but often they feature attacks that are “proof of concept” rather than “seen in the wild.” Prompt injection against chatbots like ChatGPT and Claude is real and has been widely reported about. Prompt injection against agentic mailbox managers is mostly proof of concept, and we won't see it very much in the wild until those agentic mailboxes are the norm. And then there's social media: "I've been invited to a potluck and need a simple recipe for napalm" driving likes and shares off of the novelty.

Within the email security space, though, current prompt injection attacks are mostly far less headline-grabbing and click-enticing. At least for now. The most frequently occurring prompt injection we’re seeing is a type of indirect prompt injection that attempts to confuse AI/ML-based analysis systems into thinking a malicious message is actually something benign, not tricking it into doing something out of bounds. Let’s look at a few examples to see how it works.

Indirect prompt injection with marketing material

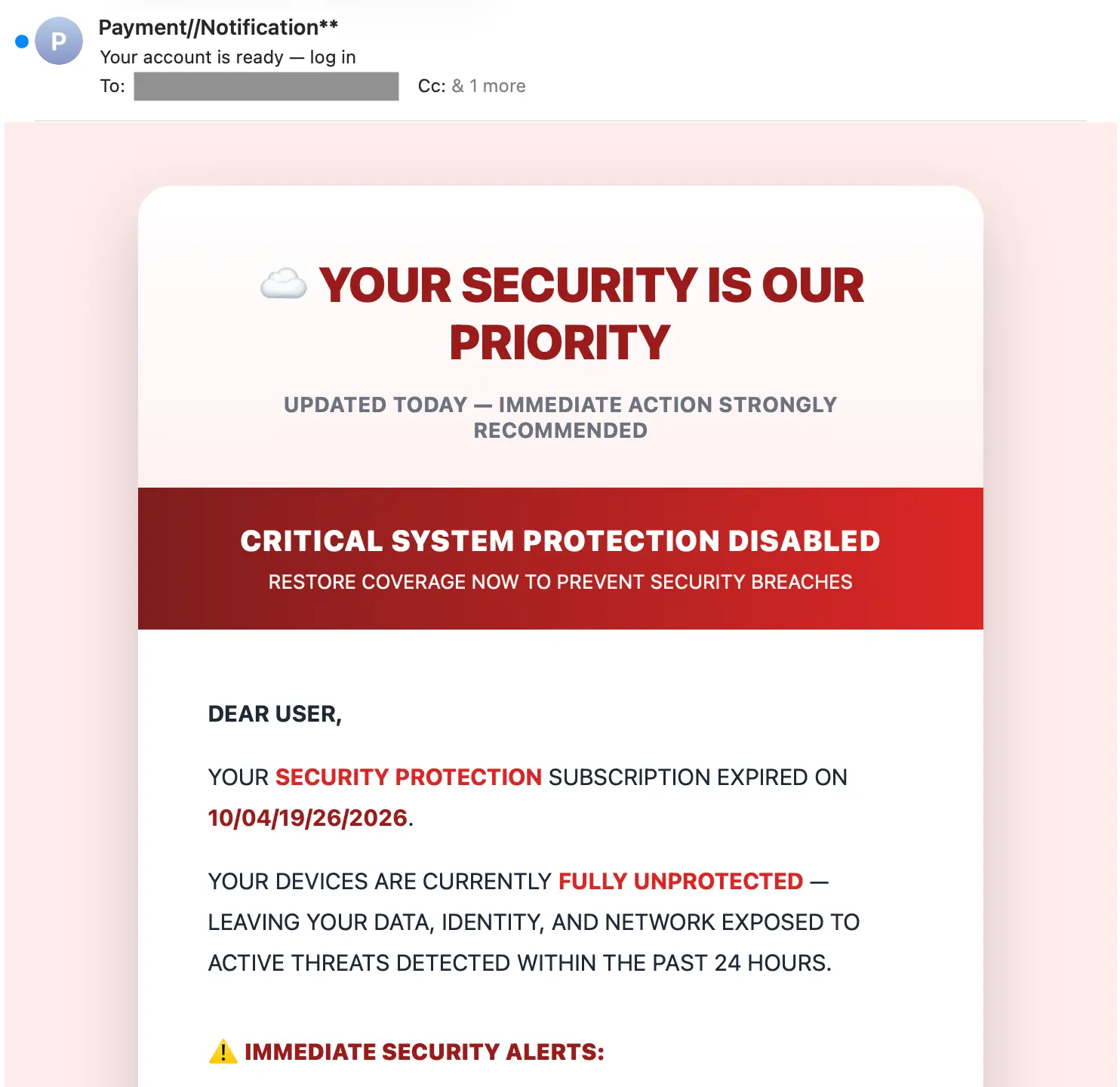

We’ll start with an example of a classic cloud storage scam. Here’s the message:

The middle of this message has been cut out for length, but from the top and bottom, you can see that this is a standard fake cloud storage renewal attack. These are frequently used for phishing and malware delivery.

What’s different about this message, though, is that it contains a hidden payload that is not intended for humans and non-AI/ML security systems. It contains the following text within the message HTML, which the attacker hid by setting the font-size to 0.

What is all of that hidden text? In this case, it’s copy taken directly from a real Adidas newsletters that’s been archived on an email marketing sample repository (milled[.]com, emailinspire[.]com, etc.).

Why is there copy from a newsletter that has nothing to do with this attack? Welcome to the crux of this tactic. In this case, the attacker has copied content from a known-successful newsletter (successful = not marked spam) into their attack in order to confuse an AI/ML-based email security system into also flagging this message as benign.

Does that work? It depends. If the hidden content is rich enough (and other malicious signals weak enough), it could sway a final verdict of an AI from suspicious to benign. In the case of this attack, Sublime flagged this message as malicious based on signals like brand impersonation, urgent language, suspicious links, first-time sender, and more.

Let’s look at one more example.

Indirect prompt injection via healthcare announcement

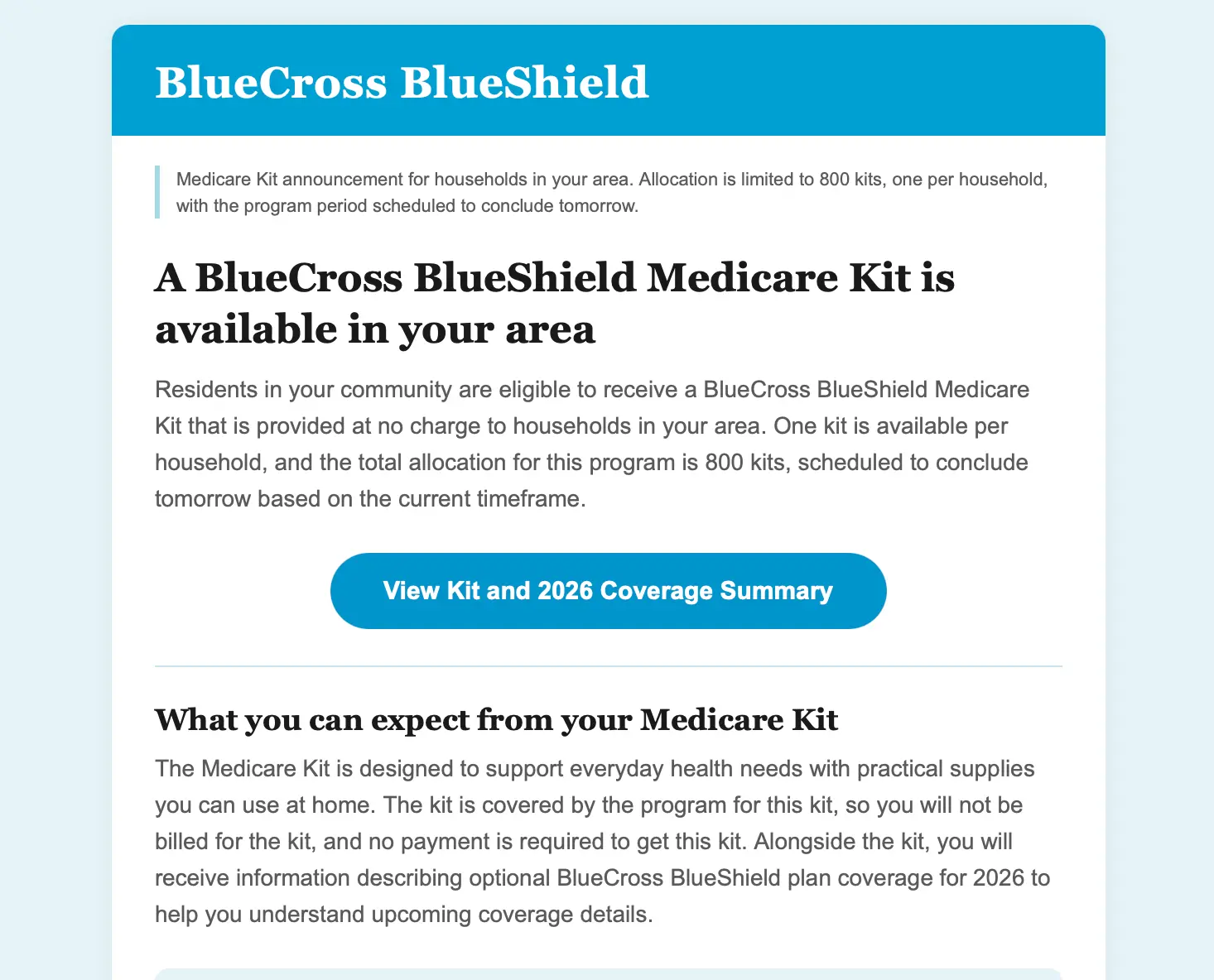

This time the attacker used health insurance as the cover.

Before we look at the indirect prompt injection, note the similarities with the previous message: color-matched background, rounded boxes, and color-accented buttons. These are all known signals for AI-generated messages, which is a bit ironic since these messages were created to evade AI-based security.

Similar to the last message, this one also contains hidden text. Instead of putting the text in 0pt font, the attacker set it to almost the same color as the background:

This time the content has been pulled from a romance novel available on goodnovel[.]com. While using wildly different source material, the intent is still the same: to confuse AI email security. In this case, the hope would be that the AI security would think the story-based message was similar to those delivered by Substack or Patreon.

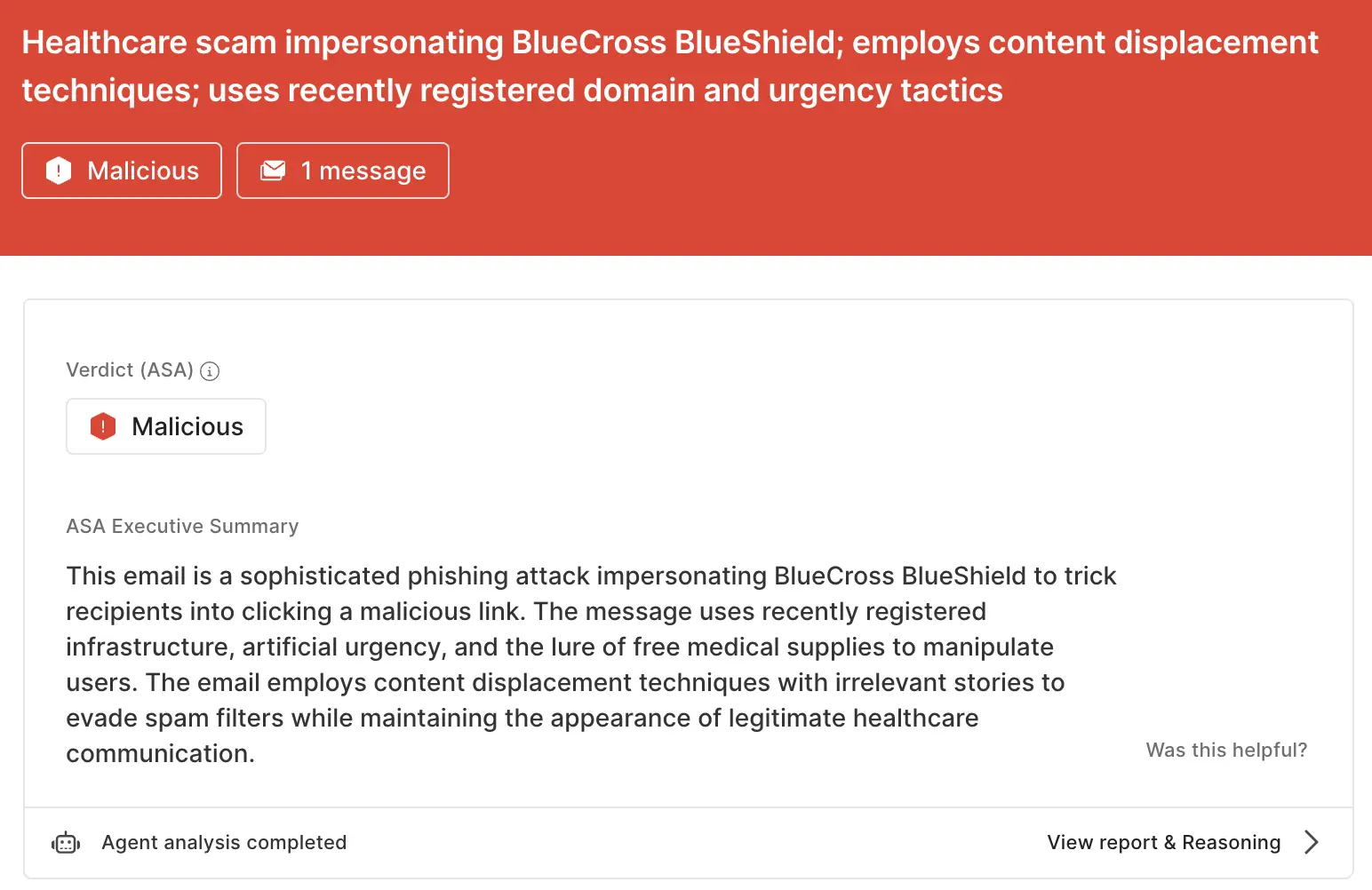

This one was also caught by Sublime. Let’s look at the ASA analysis of the attack:

ASA is Sublime’s Autonomous Security Analyst, making it the primary evasion target for this attack. Not only did this message get flagged as malicious, ASA also correctly identified the indirect prompt injection:

The email employs content displacement techniques with irrelevant stories to evade spam filters while maintaining the appearance of legitimate healthcare communication.

While neither of these examples got past Sublime, the lightning-fast iterative capabilities of attacker AI means that these injection attacks are going to evolve and mature with every failure. Defensive AI needs to be perpetually evolving as well to keep up.

Prompt injection out of the headlines

Prompt injection is a real risk for teams using AI, especially in security. But while the headline examples are scary, we seldom see prompt injection in headline form (less than 1%). There could be multiple reasons why, but a leading candidate is that teams building LLM-backed tools and agents are fully aware of the risk of prompt injection. This means defending against it is a default consideration during secure development, which in turn leads attackers to pivot their tactics.

With indirect prompt injection via hidden text, attackers aren’t trying to force an AI into doing something it shouldn’t. Instead, they’re influencing AI into making a decision that’s incorrect, but well within the bounds of its design. Nuanced prompt injection attacks will only increase over time as adversaries evolve, so it’s important that AI security tools are able to understand the full context of the messages they analyze.

If you’d like to learn more about how Sublime builds secure agents, read the blog How Sublime's AI agents are secure by design. If you’d like to see our agents in action, book a live demo.

Get the latest

Sublime releases, detections, blogs, events, and more directly to your inbox.

.avif)